For decades, enterprise data teams have assembled their analytics infrastructure piece by piece: an ETL server here, a data warehouse there, a BI platform in another corner, and a machine learning environment bolted on as an afterthought. Each tool has its own storage, its own security model, its own licensing contract, and its own team of specialists. The result is an expensive, fragile patchwork that spends more time moving data between systems than actually analyzing it.

Microsoft Fabric represents a fundamentally different approach. It is a unified analytics platform that brings data engineering, data science, real-time analytics, and business intelligence under a single roof, backed by a single storage layer. For enterprises paying seven-figure annual fees for SAS, Informatica, DataStage, or Teradata, Fabric is not just a technology upgrade — it is an architectural reset.

The Unified Analytics Promise

Most enterprises today run a patchwork of standalone tools: separate ETL servers, data warehouses, BI platforms, and ML environments. Fabric replaces that patchwork with a single, integrated experience built on OneLake.

This matters because the friction in modern data work is not in any one tool. The friction is in the seams between tools: the data copies, the format conversions, the permission synchronization, the metadata that gets lost every time data crosses a system boundary. Fabric eliminates those seams. A data engineer writes a Spark notebook that produces a Delta table in OneLake. A data analyst opens that same table in Power BI without an export, without a copy, without a new connection string. A data scientist trains a model against that same table in a Fabric notebook, and the results land right back in OneLake where everyone can see them.

The unified experience also means unified governance: one security model, one catalog, one lineage graph, one set of audit logs. For regulated industries such as financial services, healthcare, and government, this simplification is more than a convenience. It makes compliance obligations such as access audits and lineage reporting far easier to meet.

OneLake: One Copy of Data

OneLake is Fabric's unified storage layer, with tabular data stored in the open Delta Lake format. Every Fabric experience (Data Factory, Spark, Warehouse, Real-Time Analytics, Power BI) reads from and writes to the same OneLake storage. This eliminates data silos, reduces storage costs, and ensures every team works from a single source of truth.

In traditional architectures, the same dataset often exists in five or six copies: the raw landing zone, the ETL staging area, the warehouse, the BI extract, the ML training snapshot, and the analyst's personal CSV. Each copy costs storage, risks drift, and complicates governance. OneLake collapses all of that into a single, open-format copy that every engine can access natively.

Because OneLake uses Delta Lake, an open-source storage format that layers ACID transaction guarantees on top of Parquet files, data is not locked into a proprietary system. If an organization ever needs to read the same data from a non-Fabric tool, the data files are standard Parquet and the transaction log that tracks them is openly documented JSON. There is no export, no conversion, no vendor lock-in at the storage layer.
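
To make the open-format point concrete, here is a minimal Python sketch of how any reader could work out which Parquet files make up the current version of a Delta table. It assumes the publicly documented Delta log layout, in which each commit is newline-delimited JSON carrying `add` and `remove` actions. The file names and the `active_files` helper are hypothetical, and a real reader would also handle checkpoints, schema metadata, and protocol versions.

```python
import json


def active_files(commits):
    """Replay Delta-style commits in order and return the live Parquet paths.

    `commits` is a list of strings, one per commit, each holding
    newline-delimited JSON actions. Simplified: only add/remove are handled.
    """
    live = set()
    for commit in commits:
        for line in commit.splitlines():
            if not line.strip():
                continue
            action = json.loads(line)
            if "add" in action:
                live.add(action["add"]["path"])
            elif "remove" in action:
                live.discard(action["remove"]["path"])
    return sorted(live)


# Hypothetical three-commit history: two appends, then a compaction that
# replaces both small files with one larger file in a single atomic commit.
commits = [
    '{"add": {"path": "part-0000.parquet"}}',
    '{"add": {"path": "part-0001.parquet"}}',
    '{"remove": {"path": "part-0000.parquet"}}\n'
    '{"remove": {"path": "part-0001.parquet"}}\n'
    '{"add": {"path": "part-0002.parquet"}}',
]

print(active_files(commits))
```

Because the compaction lands as one commit, a reader sees either the two old files or the one new file, never a mix. That is the practical meaning of ACID guarantees over plain Parquet.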

OneLake also provides automatic data cataloging. Every table, every column, every transformation is registered in the OneLake catalog with lineage metadata. This means governance teams can trace a Power BI metric all the way back through the Spark transformations to the original source system — without building a separate lineage tool.

MigryX: Idiomatic Code, Not Line-by-Line Translation

The difference between MigryX and manual migration is not just speed — it is code quality. MigryX generates idiomatic, platform-optimized code that leverages native features of your target platform. A SAS DATA step does not become a clunky row-by-row loop — it becomes a clean, vectorized DataFrame operation. A PROC SQL query does not become a literal translation — it becomes an optimized query that takes advantage of your platform’s pushdown capabilities.

The Six Fabric Experiences

Fabric is not a single product. It is six integrated experiences, each optimized for a specific workload, all sharing the same storage and security model:

- Data Factory. The orchestration engine. Data Factory provides a visual interface for building and scheduling data pipelines, with connectors to hundreds of source systems. It replaces standalone ETL tools like Informatica PowerCenter, IBM DataStage, and SSIS.

- Synapse Data Engineering. The Spark-based notebook environment. Data engineers write PySpark, Scala, or SQL to transform data at scale. It replaces SAS DATA step processing, custom Python scripts, and Hadoop-based ETL.

- Synapse Data Warehouse. A fully managed T-SQL warehouse. Analysts and engineers write standard SQL against Delta tables stored in OneLake. It replaces Teradata, Netezza, Oracle Exadata, and on-premises SQL Server data warehouses.

- Synapse Data Science. An integrated ML environment with experiment tracking, model registry, and MLflow integration. It replaces SAS Enterprise Miner, standalone Jupyter environments, and custom ML platforms.

- Real-Time Analytics. A KQL (Kusto Query Language) engine for streaming and time-series data. It handles IoT telemetry, application logs, and event-driven analytics that traditional batch platforms cannot address.

- Power BI. The visualization and reporting layer, now deeply embedded in Fabric. Power BI reads directly from OneLake without data movement, enabling real-time dashboards on top of warehouse and lakehouse tables.

Each experience runs on shared compute capacity but with engine-specific optimizations. A Spark notebook gets Spark executors. A warehouse query gets a SQL optimizer. Power BI gets its own rendering engine. The key insight is that all of these engines share the same data — no copies, no syncs, no glue code.

Platform-Specific Optimization by MigryX

MigryX maintains deep knowledge of every target platform’s strengths and best practices. When converting to Snowflake, it leverages Snowpark and native SQL functions. When targeting Databricks, it uses PySpark DataFrame operations optimized for distributed execution. When generating dbt models, it follows dbt best practices for modularity and testability. This platform awareness is what makes MigryX output production-ready from day one.

Why Legacy Platforms Can't Compete

Traditional analytics architectures require separate licensing, separate administration, and manual data movement between tools. An enterprise running SAS for statistical analysis, Informatica for ETL, Teradata for warehousing, and Tableau for visualization is paying four vendors, managing four security models, and maintaining a fleet of custom scripts to shuttle data between them.

Fabric eliminates the glue code, the data copies, and the integration complexity. For organizations paying seven figures in SAS, Informatica, or DataStage licensing, Fabric represents both a capability upgrade and a cost reduction. The capacity-based pricing model means organizations pay for a shared pool of compute that every workload draws on, rather than for a separate permanent server license for each tool.

The skills gap is equally significant. The market for SAS programmers is shrinking. The market for Python, SQL, and Spark engineers is growing. Fabric's native support for PySpark, T-SQL, and Python notebooks means organizations can hire from a much larger talent pool — and the engineers they hire will have transferable skills rather than vendor-specific certifications.

Perhaps most importantly, Fabric's Copilot AI capabilities — built directly into notebooks, warehouse queries, and Data Factory pipelines — provide intelligent assistance that legacy platforms simply cannot match. Auto-generated code suggestions, natural-language query interfaces, and automated documentation are not bolt-on features. They are native to the platform.

Migration Challenges Are Real

Moving to Fabric is not just "rewrite in SQL." Legacy SAS programs, DataStage jobs, and Informatica mappings encode complex business logic that requires careful translation. Macro systems, custom functions, and implicit type coercions all need explicit handling.

Consider a typical SAS estate of 5,000 programs. Those programs contain tens of thousands of PROC steps, hundreds of macro libraries, custom format catalogs, and implicit behaviors — like how SAS handles missing values differently from SQL NULL, or how the SAS DATA step's implicit loop processes rows one at a time while Spark operates on entire DataFrames. Every one of these differences must be identified, translated, and validated.
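
The missing-value difference is easy to underestimate, so here is a small Python sketch of it. It models only the comparison rule: in a SAS DATA step a missing numeric value compares as lower than any number, while in SQL a comparison involving NULL evaluates to UNKNOWN and the row is filtered out. The function names and sample data are illustrative assumptions, not output from any tool.

```python
MISSING = None  # stands in for SAS numeric missing (.) and for SQL NULL


def sas_less_than(x, y):
    """SAS-style comparison: a missing numeric sorts below every number."""
    def key(v):
        return (0, 0.0) if v is MISSING else (1, v)
    return key(x) < key(y)


def sql_less_than(x, y):
    """SQL-style comparison: any NULL operand yields UNKNOWN (None here)."""
    if x is MISSING or y is MISSING:
        return None
    return x < y


balances = [250.0, MISSING, 40.0]

# The same filter, "balance < 100", under each set of rules.
sas_rows = [b for b in balances if sas_less_than(b, 100)]
sql_rows = [b for b in balances if sql_less_than(b, 100) is True]

print(sas_rows)  # the missing row is kept
print(sql_rows)  # the NULL row is dropped
```

A literal translation of the filter silently changes the row count, which is why a converted query typically needs an explicit `OR balance IS NULL` (or an equivalent) wherever the original logic relied on the SAS behavior.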

DataStage and Informatica jobs present their own challenges. Visual ETL designs stored in proprietary metadata repositories must be parsed, understood, and reconstructed as Data Factory pipelines or Spark notebooks. Parallel execution semantics, partitioning strategies, and error-handling behaviors all differ between the source and target platforms.

Manual migration at this scale is prohibitively slow. A team of ten engineers that collectively converts five programs per week would need 1,000 weeks, or roughly nineteen years, to migrate 5,000 programs. Automated conversion is not optional. It is the only viable approach.
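
The estimate is simple arithmetic, sketched below so the assumptions are explicit. It treats the five-programs-per-week figure as the whole team's throughput, which is the reading that produces a figure near twenty years, and assumes 52 working weeks per year. The second call shows how sensitive the result is if the rate were per engineer instead.

```python
WEEKS_PER_YEAR = 52  # assumption: no allowance for holidays or rework


def years_to_migrate(total_programs, programs_per_week):
    """Return the calendar years needed at a constant weekly throughput."""
    weeks = total_programs / programs_per_week
    return weeks / WEEKS_PER_YEAR


team_total = years_to_migrate(5_000, 5)         # 5 per week for the whole team
per_engineer = years_to_migrate(5_000, 5 * 10)  # 5 per week for each of 10 engineers

print(f"{team_total:.1f} years")
print(f"{per_engineer:.1f} years")
```

Even the optimistic reading leaves a multi-year manual effort before any validation work is counted.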

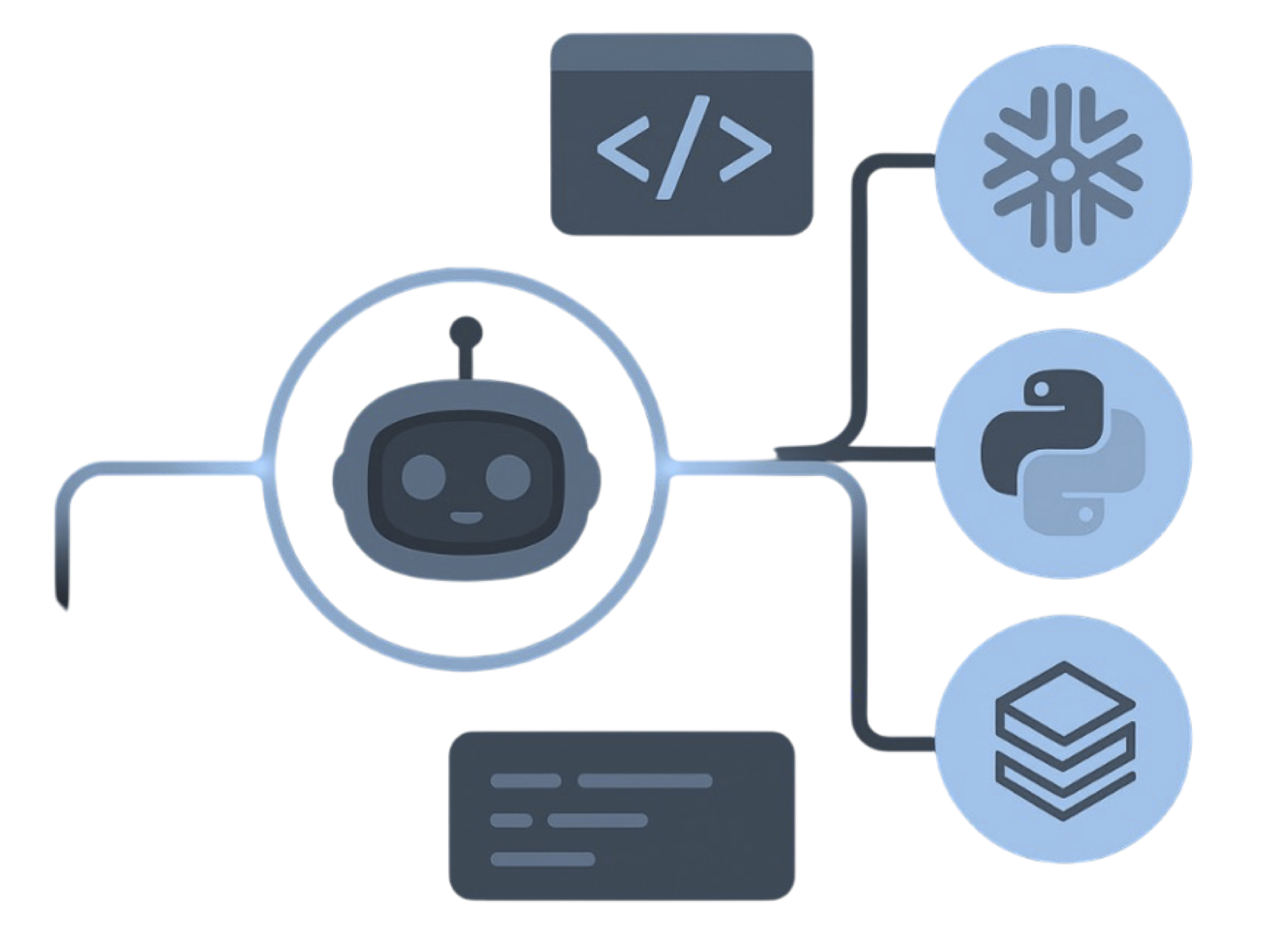

How MigryX Targets Fabric Natively

MigryX is purpose-built for exactly this challenge. Its custom parsers understand every source dialect deeply — SAS macro language, Informatica XML mappings, DataStage OSH, Teradata BTEQ, Oracle PL/SQL — and generate Fabric-native output that leverages the full platform.

For compute-heavy transformations, MigryX generates Spark Notebooks with optimized PySpark code that runs in Synapse Data Engineering. For analytical queries and reporting logic, it generates Data Warehouse SQL targeting Synapse Data Warehouse's T-SQL engine. For orchestration and scheduling, it produces Data Factory pipelines with proper dependency chains, error handling, and retry logic.
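
As a rough illustration of what "dependency chains" and "retry logic" mean in practice, the Python sketch below orders a set of pipeline activities so that each runs after its prerequisites, and wraps a step in a bounded retry. The activity names and both helper functions are hypothetical. This is not Data Factory's pipeline format or MigryX output, only the underlying idea.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each activity maps to the activities it depends on.
dependencies = {
    "load_customers": set(),
    "load_orders": set(),
    "join_orders_customers": {"load_customers", "load_orders"},
    "publish_report_table": {"join_orders_customers"},
}


def execution_order(deps):
    """Return one valid run order; raises graphlib.CycleError on a cycle."""
    return list(TopologicalSorter(deps).static_order())


def run_with_retry(task, max_attempts=3):
    """Call task() until it succeeds; re-raise the error on the last attempt."""
    for attempt in range(1, max_attempts + 1):
        try:
            return task()
        except Exception:
            if attempt == max_attempts:
                raise


order = execution_order(dependencies)
print(order)
```

The two load steps have no ordering constraint between them, so an orchestrator is free to run them in parallel. Only the join and publish steps must wait.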

Critically, MigryX does not stop at code generation. After conversion, it automatically registers OneLake catalog entries with column-level lineage — mapping every source column through every transformation step to the target Fabric table column. This means governance teams have full lineage from day one, not as a follow-up project months after migration.
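
Column-level lineage can be pictured as a graph of edges from upstream columns to downstream columns. The Python sketch below is a minimal model of that idea, assuming an acyclic graph. The `LineageEdge` structure, the column names, and the `trace_to_sources` helper are invented for illustration and do not represent the OneLake catalog API or the MigryX metadata format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageEdge:
    source: str     # upstream column
    target: str     # downstream column
    transform: str  # short description of the transformation step


def trace_to_sources(column, edges):
    """Walk lineage edges upstream and return the original source columns."""
    upstream = [e.source for e in edges if e.target == column]
    if not upstream:
        return {column}  # nothing feeds this column, so it is a source
    sources = set()
    for col in upstream:
        sources |= trace_to_sources(col, edges)
    return sources


# Hypothetical lineage for one reporting column.
edges = [
    LineageEdge("legacy.accounts.bal_amt", "staging.accounts.balance", "rename and cast"),
    LineageEdge("legacy.fx.rate", "staging.fx.rate", "copy"),
    LineageEdge("staging.accounts.balance", "gold.accounts.balance_usd", "multiply by rate"),
    LineageEdge("staging.fx.rate", "gold.accounts.balance_usd", "multiply by rate"),
]

print(sorted(trace_to_sources("gold.accounts.balance_usd", edges)))
```

With edges like these recorded at conversion time, answering "where does this report figure come from?" becomes a graph walk rather than a code-reading exercise.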

MigryX Fabric Output

MigryX generates production-ready Fabric Spark Notebooks, Data Warehouse SQL, and Data Factory pipelines from any legacy source, with full column-level lineage registered in the OneLake catalog.

The result is not a rough translation that requires weeks of manual refinement. It is production-ready Fabric code with proper error handling, logging, parameterization, and governance metadata — ready for validation and deployment.

For enterprises evaluating their next analytics platform, the question is no longer whether to move to a unified architecture. The question is how quickly you can get there without breaking the business logic that your organization depends on. Fabric provides the platform. MigryX provides the bridge.

Why MigryX Delivers Superior Migration Results

The challenges described throughout this article are exactly what MigryX was built to solve. Here is how MigryX transforms this process:

- Production-ready output: MigryX generates code that passes code review and runs in production — not prototype-quality output that needs weeks of cleanup.

- Platform optimization: Converted code leverages target platform-specific features for maximum performance and cost efficiency.

- 25+ source technologies: MigryX handles migrations from SAS, Informatica, DataStage, SSIS, and more than twenty other legacy technologies.

- Automated documentation: Every conversion decision is documented with before/after code mappings and transformation rationale.

MigryX combines precision AST parsing with Merlin AI to deliver 99% accurate, production-ready migration, turning what used to be a multi-year manual effort into a streamlined, validated process.